Win the Enterprise Deal You’re Losing in AI Security Review.

A fractional AI security team augmented with our own platform — 65+ years of CISO and enterprise architecture expertise, paired with continuous adversarial AI testing. We become your ongoing AI security function — through this deal, and every one after.

Three questions we ask every founder who finds us.

Most can’t answer them.

What do you do to protect your clients’ data and your IP against leaks — today, not someday?

Have you tried to trick your own system into giving you information it shouldn’t?

Do you have a pre-flight checklist before pushing AI to production?

If you paused on any of those, the security team reviewing your deal is going to pause a lot longer.

We worked with an experienced engineering team. Senior people. Good code. Tested product.

Within 24 hours, we proved an attacker could exfiltrate their customers’ data and their own IP from their test systems.

Not because they were careless. Because AI security is a different discipline than application security — and most teams haven’t been trained to exploit their own AI the way an adversary will.

That’s not a one-off finding. That’s the pattern.

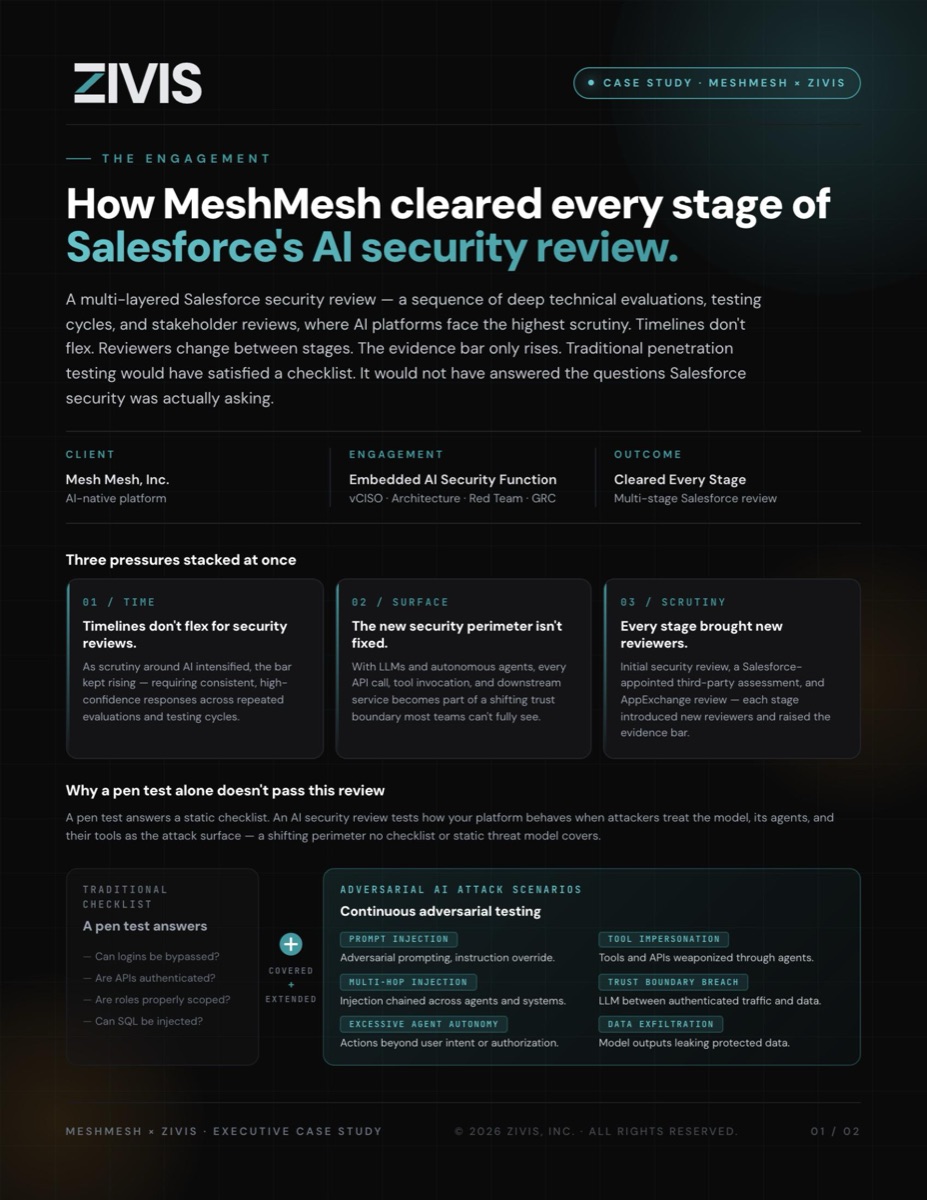

How MeshMesh cleared every stage of Salesforce’s AI security review.

A multi-layered Salesforce security review — deep technical evaluations, testing cycles, and stakeholder reviews where AI platforms face the highest scrutiny. Timelines don’t flex. Reviewers change between stages. The evidence bar only rises.

A traditional pen test would have satisfied a checklist. It would not have answered the questions Salesforce security was actually asking.

“We didn’t deliver a test. We became their security function — vCISO in the room, architecture review before features shipped, adversarial AI testing after, GRC evidence in lockstep with remediation.”

— Jake Miller, Co-Founder & CEO, ZIVIS

Most AI security help arrives in one of four packages.

Automated scanners

Noisy reports without judgment or context.

Boutique pen test firms

Depth at a point in time, then they leave.

vCISO firms

Strategic leadership without hands-on offensive capability.

GRC firms

Compliance paperwork without technical engagement.

None of them clears a multi-stage enterprise security review on their own.

ZIVIS operates as a single embedded team that does all four at once.

The gap where those four normally fail to overlap is where enterprise procurement stalls. It’s also where we work.

ZIVIS is not a platform you buy.

It’s a security team you hire.

The team brings 65+ years of combined CISO and enterprise architecture experience — Jim Goldman, formerly Salesforce’s first VP of Global Security GRC, and Jake Miller, who has spent 25 years engineering complex enterprise systems and still writes the code that finds the vulnerabilities nobody else is looking for.

We use a proprietary platform we built ourselves.

Not open-source tools anyone else can run. The platform extends what the team can cover. The team makes the platform’s output actionable.

A platform tells you what’s wrong.

ZIVIS tells you what’s wrong, what it means for your deal, and what to do about it, in the language the reviewer on the other side of the CISO call needs to hear.

From diagnosis to deal cleared.

What 30 days with ZIVIS looks like — and what comes after.

Diagnosis

Days 1–14

We run recon, threat modeling, and adversarial testing across your AI surface. OWASP Web, API, LLM, and Agentic AI, executed in parallel, not sequentially. Findings flow to you as we find them, not in a PDF at the end.

Treatment Plan

Days 15–30

A bespoke, data-informed plan built from what we found and what your specific buyer cares about. Not a generic checklist. Not recycled from the last customer. Written for your system, your deal, your review cycle.

Remediation and Retest

Ongoing

Every finding tracked to verified closure. Retest evidence captured for reviewers who will ask for it. Our proprietary platform runs continuously, so what we prove today is still true when the reviewer asks next month.

In the Room

When the call lands

When the buyer’s CISO wants a call, Jim shows up. Not a junior consultant. Not a scheduler. Jim, with the credential that the reviewer on the other side already recognizes.

And we don’t stop.

Every new model, every new agent, every new tool wired into your AI surface: Diagnosis (Phase 01) begins again. The team is already in flight. The platform is still running. What you prove today stays true tomorrow because we never stopped looking.

Priority onboarding for teams with an active enterprise security review in flight.

Book 30 minutes with Jim and Jake

One CISO with 30+ years across enterprise security. One offensive engineer with 25 years finding what scanners miss. One conversation about the deal at risk.